Chain-of-Thought (CoT) prompting is a technique where asking questions, rather than issuing direct instructions, activates a model’s full internal reasoning pathway.

The key insight of this framing is that instructions skip the first three steps of the four-step reasoning pathway described below, jumping straight to synthesis, while questions force the model to work through the entire reasoning chain.

What Chain-of-Thought Actually Is

Chain-of-Thought prompting is a method that improves LLM performance by encouraging the model to generate a series of intermediate reasoning steps before arriving at a final answer, rather than jumping directly to a conclusion.

It was first formally studied by Google researchers, who found that CoT reasoning abilities emerge naturally in sufficiently large language models (roughly 100B parameters and up) and can be triggered by the right prompt structure.

Why Questions Trigger Deeper Reasoning

When you ask a question, you expand the model’s working context. Instead of jumping from input to output, the model generates intermediate thoughts, and each step becomes part of the context for producing the next one, creating a true “chain” of logic.

Current instruction-tuned models actually incorporate CoT explanation data during post-training, meaning the reasoning pathway is baked in and the right prompt just unlocks it.

By contrast, a direct instruction compresses that process, often producing shallower output.

The Four-Step Reasoning Pathway Explained

Here’s what the model is doing internally during each step:

- Analyzes requirements — Decomposes the question into its core components, identifying what type of reasoning is needed (factual, evaluative, causal, etc.)

- Considers multiple frameworks — Explores different approaches or mental models that could apply, similar to how a human might brainstorm multiple angles

- Evaluates trade-offs — Compares frameworks against each other, weighing evidence, relevance, and potential errors before committing to a direction

- Synthesizes a nuanced answer — Combines the best elements from the prior steps into a coherent, high-quality response

How to Deliberately Activate It

You don’t need to rely on questions alone. You can explicitly trigger CoT reasoning with specific prompt techniques:

- “Let’s think step by step” — A simple phrase that has been shown to quadruple accuracy on some benchmarks (from 18% to 79% on one math dataset)

- Few-shot exemplars — Provide examples that show reasoning steps, not just answers, which guides the model to replicate that process

- Sub-question chaining — Break your prompt into sequential sub-questions, feeding each answer into the next prompt

- Tree-of-Thought (ToT) — An advanced extension where the model explores multiple reasoning branches simultaneously before choosing the best path, modeled as a search tree
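Of the techniques above, sub-question chaining is the easiest to show in code. The following is a minimal sketch, not a definitive implementation: `chain_sub_questions` and the `ask` callable are hypothetical names, and `ask` stands in for whatever function sends a prompt to your model and returns its text reply.

```python
from typing import Callable, List


def chain_sub_questions(sub_questions: List[str], ask: Callable[[str], str]) -> List[str]:
    """Ask each sub-question in order, feeding earlier answers into later prompts.

    `ask` is an assumed stand-in for a model call: it takes a prompt string
    and returns the model's reply as a string.
    """
    answers: List[str] = []
    for question in sub_questions:
        # Every earlier question/answer pair becomes context for this prompt.
        context = "\n".join(
            f"Q: {q}\nA: {a}" for q, a in zip(sub_questions, answers)
        )
        current = f"Q: {question}\nA:"
        prompt = f"{context}\n{current}" if context else current
        answers.append(ask(prompt))
    return answers
```

Each call sees the full history of earlier answers, which mirrors the idea that every step becomes part of the context for the next one.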

The Core Practical Takeaway

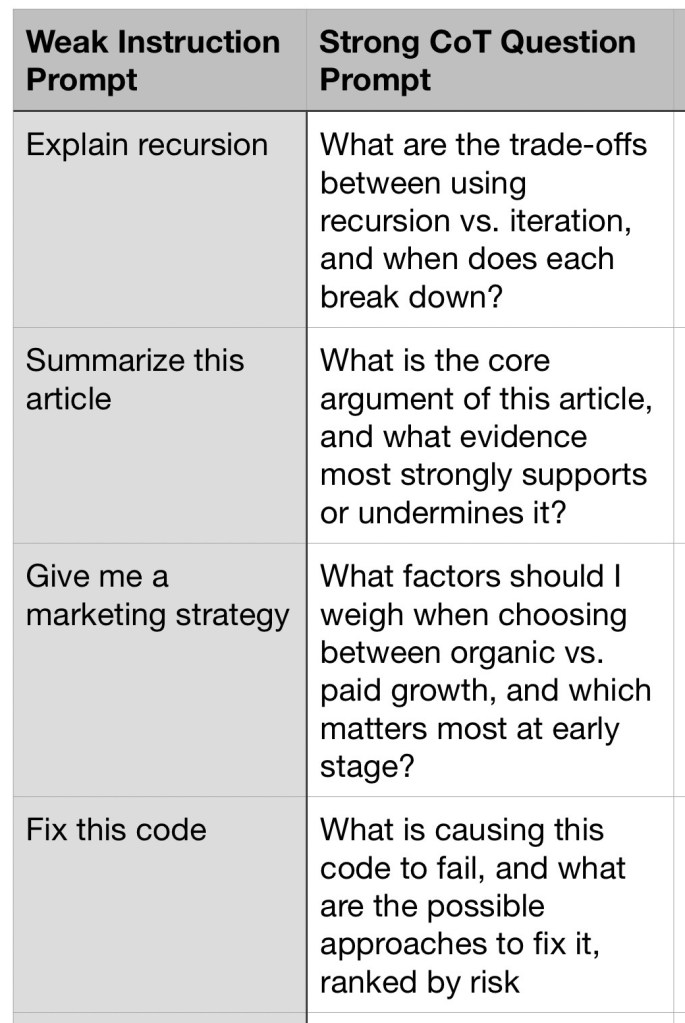

The fundamental difference between a weak and a strong prompt is whether it forces the model to earn its answer.

Prompts like “What are the trade-offs between X and Y?” or “Walk me through the reasoning behind…” force all four steps, while “Tell me about X” risks skipping the analytical work entirely.

Structuring prompts as genuine reasoning challenges rather than information requests tends to produce more accurate, nuanced, and reliable outputs.

Here are concrete, practical examples of prompts that apply the CoT technique, organized by approach and use case.

The Core Principle: Questions Over Instructions

The fundamental switch is simple. Instead of commanding an output, you frame prompts to trigger the reasoning pathway. For example, ask “What are the trade-offs between X and Y?” rather than saying “Tell me about X.”

Zero-Shot CoT Triggers

These are single-phrase additions that unlock the full reasoning chain with zero examples needed:

- “Let’s think step by step.” — The classic trigger; shown to dramatically improve accuracy on reasoning benchmarks

- “Before answering, explain your thinking.”

- “Walk me through the reasoning before giving a conclusion.”

- “Show your work at every stage.”

- “Think aloud before answering.”
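If you build prompts programmatically, the triggers listed above can be appended with a small helper. This is only a sketch: the dictionary keys and the function name `add_cot_trigger` are illustrative choices, not part of any library.

```python
# Trigger phrases taken from the list above; the short keys are hypothetical labels.
ZERO_SHOT_TRIGGERS = {
    "step_by_step": "Let's think step by step.",
    "explain_first": "Before answering, explain your thinking.",
    "walk_through": "Walk me through the reasoning before giving a conclusion.",
    "show_work": "Show your work at every stage.",
    "think_aloud": "Think aloud before answering.",
}


def add_cot_trigger(question: str, trigger: str = "step_by_step") -> str:
    """Return the question followed by a blank line and the chosen trigger phrase."""
    return f"{question.strip()}\n\n{ZERO_SHOT_TRIGGERS[trigger]}"
```

Keeping the phrases in one place makes it easy to compare triggers on the same question.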

Structured CoT Prompt Formula

The most reliable CoT prompt structure follows this pattern:

[Your question or scenario]

Break this down step by step:

- First, consider [Aspect A]

- Then, evaluate [Aspect B]

- Identify the key trade-offs

- Give your final recommendation with reasoning
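The pattern above can be filled in from code. Here is a minimal sketch, assuming you want the steps numbered. `build_structured_prompt` is a hypothetical helper name, and the numbering is a choice made here rather than something the formula requires.

```python
from typing import List


def build_structured_prompt(scenario: str, steps: List[str]) -> str:
    """Assemble a scenario plus an ordered list of reasoning steps into one prompt."""
    lines = [scenario.strip(), "", "Break this down step by step:"]
    # Number the steps so the model addresses them in order.
    lines += [f"{i}. {step}" for i, step in enumerate(steps, start=1)]
    return "\n".join(lines)


example_prompt = build_structured_prompt(
    "Should we move our documentation to a static site generator?",
    [
        "First, consider maintenance cost",
        "Then, evaluate contributor experience",
        "Identify the key trade-offs",
        "Give your final recommendation with reasoning",
    ],
)
```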

Real example for content work:

“I’m writing a newsletter piece about AI in healthcare. Break this down step by step:

- What angle will resonate most with a general audience?

- What are the strongest counterarguments I should address?

- What are the trade-offs between being accessible vs. technically accurate?

- Recommend a headline and opening structure.”

Question-Analysis Prompting (QAP)

A research-backed variant where you ask the model to restate and analyze the question before answering. This has been shown to improve accuracy on reasoning tasks because it forces the model to correctly interpret ambiguous intent before committing to a response:

Before answering, restate this question in your own words and identify any ambiguities or assumptions embedded in it.

Then answer: [Your question]
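As a rough sketch, the template above can be wrapped in a helper so every question gets the same analysis preamble. The function name `qap_prompt` is hypothetical, and the wording simply reuses the template shown here.

```python
def qap_prompt(question: str) -> str:
    """Prefix a question with a restate-and-analyze instruction."""
    preamble = (
        "Before answering, restate this question in your own words and "
        "identify any ambiguities or assumptions embedded in it."
    )
    return f"{preamble}\n\nThen answer: {question.strip()}"
```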

Few-Shot CoT: Showing Reasoning by Example

When you want the most reliable output, provide one or two examples that show the reasoning process, not just the final answer:

Example:

Q: Should a startup prioritize SEO or paid ads first?

A: First, consider the startup’s cash runway — SEO takes 6–12 months but compounds; paid ads deliver fast results but drain budget.

Second, consider team skills.

Third, evaluate the competitive keyword landscape.

Conclusion: SEO-first if runway > 12 months, paid ads if speed to revenue is critical.

Now apply the same reasoning to:

Q: Should our blog prioritize long-form guides or short news posts?
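A few-shot CoT prompt like the one above can also be assembled from structured exemplars. The sketch below is illustrative only: `Exemplar` and `build_few_shot_prompt` are hypothetical names, and the layout copies the Q/A format of the example.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Exemplar:
    question: str
    reasoning: List[str]  # one entry per reasoning step, in order
    conclusion: str


def build_few_shot_prompt(exemplars: List[Exemplar], new_question: str) -> str:
    """Render worked examples with their reasoning, then pose the new question."""
    blocks = []
    for ex in exemplars:
        steps = "\n".join(ex.reasoning)
        blocks.append(f"Q: {ex.question}\nA: {steps}\nConclusion: {ex.conclusion}")
    # End with an open "A:" so the model continues in the same format.
    blocks.append(f"Now apply the same reasoning to:\nQ: {new_question}\nA:")
    return "\n\n".join(blocks)
```

Storing the reasoning as separate steps keeps the exemplars easy to edit and makes it harder to accidentally supply an answer-only example.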

When NOT to Use CoT Prompting

CoT adds overhead and isn’t always better — skip it for:

- Simple factual lookups (“What year did X happen?”)

- Basic translations or typo corrections

- Open-ended creative writing without constraints

- Quick formatting or template-filling tasks

The key rule: use CoT whenever the quality of the answer depends on the quality of the reasoning that precedes it.
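In a pipeline, that rule might be approximated with a simple switch. This is a sketch under loud assumptions: the task labels in `SKIP_COT_TASKS` are hypothetical categories based on the skip list above, and deciding which label a request gets is left to you.

```python
# Hypothetical task labels that mirror the "skip it for" list above.
SKIP_COT_TASKS = {
    "factual_lookup",
    "translation",
    "typo_fix",
    "open_creative",
    "formatting",
    "template_fill",
}


def maybe_add_cot(prompt: str, task_type: str) -> str:
    """Append a CoT trigger unless the task is one where CoT only adds overhead."""
    if task_type in SKIP_COT_TASKS:
        return prompt
    return f"{prompt}\n\nLet's think step by step."
```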