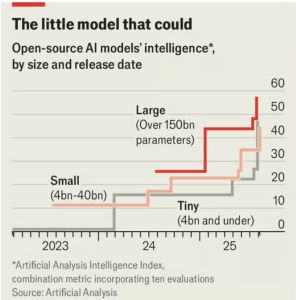

Is faith in the supposed "God-like" powers of large language models (LLMs) waning as businesses and developers shift their focus to smaller, more nimble alternatives? If so, the trend marks a significant change in the AI landscape. It has important implications both for the tech giants at the forefront and for companies, like Apple, that have taken a more cautious approach.

Perception Shift: From Hype to Utility

In their early days, LLMs such as OpenAI's GPT family were likened to the iPhone revolution, promising transformational capabilities. As the field matures, however, new model releases like GPT-5 are drawing less excitement, much like the routine updates of annual smartphone launches. The pace of improvement at the AI frontier has slowed, and with it the sense of groundbreaking innovation.

Rise of Small Language Models (SLMs)

A key driver of the waning enthusiasm is the rapid rise of small language models (SLMs), which are increasingly preferred in the corporate sector. SLMs offer several advantages:

- Cost-effectiveness: They are cheaper to train and deploy than massive, all-purpose LLMs, whose full breadth of knowledge is often unnecessary for practical applications.

- Customization: Companies can tailor SLMs to their specific needs, resulting in more relevant and efficient tools.

- Performance: For many enterprise functions, such as HR chatbots or workflow automation, SLMs provide sufficient capability without excess computational overhead.

- Deployment Flexibility: SLMs can run on-premises as easily as in the cloud, and they are well suited to energy-constrained devices such as smartphones, vehicles, and robots.

Technical Catch-Up and Apple’s Perspective

Thanks to advances in training techniques, especially "distillation," in which a large model's outputs are used to train a smaller one, SLMs are rapidly catching up to their giant predecessors. For example, Nvidia's 9-billion-parameter Nemotron Nano recently outperformed a Meta Llama model 40 times its size on several benchmarks.

This trend benefits companies like Apple, which has prioritized efficient, on-device AI over investing billions in cloud-based LLMs. A shift toward small but capable models appears to validate Apple's approach and could give it an edge in building user-friendly, privacy-respecting AI features that run on the device itself.

Why This Matters

- The era of LLM "mythology" may be fading: Blind faith in massive, centralized models appears to be giving way to more pragmatic, use-case-driven development.

- AI democratization is accelerating: As SLMs become more capable and affordable, innovation and experimentation are within reach for more organizations.

- Tech giants must adapt: Companies heavily invested in ever-larger models may lose ground to nimble competitors and device-makers that deploy targeted, efficient AI solutions.

In Summary

The shift from grand, all-knowing LLMs to specialized, efficient SLMs signals a maturing AI industry and a sobering after the early euphoria. For pragmatic device-makers like Apple, this change in sentiment could represent not just good news but a strategic turning point.

From a paywalled article in The Economist